On November 6, 2019, the Yale Center for Collaborative Arts and Media (CCAM) welcomed Marijke Jorritsma, Senior User Experience Designer at the NASA Jet Propulsion Laboratory, as part of its Wednesday Wisdom series. In attendance was a mix of artists, graphic designers, architects, musicians, STEM students, and professors, all interested in some aspect of Jorritsma’s talk, which covered how to create music and art with machine learning, the ethics of designing for AI, and whether machines are capable of being creative.

Jorritsma began the event by introducing herself and her work at JPL Caltech in Pasadena, CA. In one project, she examined designing processes and software to support spacecraft operations for the Europa Clipper mission, a spacecraft set to arrive at the Jovian moon in 2025. A second project, OnSight, involved designing augmented reality tools for spacecraft engineering and Mars exploration. A third, Protospace, is a JPL-developed software package that uses the Microsoft HoloLens mixed-reality platform for rapid collaborative visualization of complex mechanical CAD models. In college she became interested in creating electronic music, and she still explores ways to use emerging technologies and science to build non-traditional electronic instruments and software. She observed that she and others are very dependent on hardware and software tools, and described this as a time of designing not just algorithms but also the surrounding products and experiences that accompany them. She is a member of the programming team for “Loop”, Ableton’s music, technology, and creativity conference.

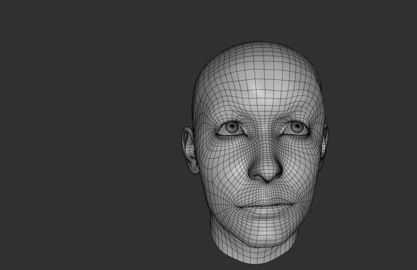

She then brought up a prototype of music software called Groove Pizzeria (pictured above), made by a current student at NYU. It is based on the idea of the rhythm necklace, a circular representation of musical rhythm. The Groove Pizza is a set of three concentric rhythm necklaces, each of which controls one drum sound, e.g. kick, snare, and hi-hat. The Groove Pizzeria gives you two sets of concentric rhythm necklaces, which makes polyrhythm and polymeter possible. Learning music through the body, she noted, makes it easier to remember a composition more quickly. Her only audience was the sensor. She explored how designers and artists can frame machine intelligence in their work, and addressed the question of how best to use data to create the next generation of user experiences.
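To make the rhythm necklace idea concrete, here is a minimal sketch in Python. It is not the Groove Pizzeria's actual implementation; the function names (`onsets`, `rotate`, `polymeter`) and the example patterns are assumptions chosen for illustration. A necklace is modeled as a list of 0s and 1s read around a circle, three such lists stand in for one "pizza", and two necklaces of different lengths are stepped together to show polymeter.

```python
from math import gcd

def onsets(necklace):
    """Return the step indices where the necklace has a hit."""
    return [i for i, hit in enumerate(necklace) if hit]

def rotate(necklace, steps):
    """Rotate the necklace clockwise by `steps` positions (wraps around)."""
    n = len(necklace)
    steps %= n
    if steps == 0:
        return list(necklace)
    return list(necklace[-steps:]) + list(necklace[:-steps])

def polymeter(a, b):
    """Step two necklaces of different lengths side by side.

    Returns (tick, hit_a, hit_b) tuples until both necklaces
    are back at their starting positions at the same time.
    """
    cycle = len(a) * len(b) // gcd(len(a), len(b))  # least common multiple
    return [(t, a[t % len(a)], b[t % len(b)]) for t in range(cycle)]

# One hypothetical "pizza": three concentric 8-step necklaces, one per drum.
pizza = {
    "kick":   [1, 0, 0, 0, 1, 0, 0, 0],
    "snare":  [0, 0, 1, 0, 0, 0, 1, 0],
    "hi-hat": [1, 1, 1, 1, 1, 1, 1, 1],
}

# Two necklaces of different lengths (3 steps against 4) for polymeter.
three = [1, 0, 0]
four = [1, 0, 0, 0]
combined = polymeter(three, four)
together = [t for t, x, y in combined if x and y]
```

Because the pattern is circular, rotating a necklace shifts where the hits fall in the bar without changing the spacing between them. In the polymeter example the two patterns only line up again after a full combined cycle.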

She then mentioned a few Los Angeles-based music groups she has talked to, including lucky dragons, an experimental music group consisting of Luke Fischbeck and Sarah Rara, and YACHT, a dance-pop band that used artificial intelligence processes to help compose the songs on its latest album, Chain Tripping. Lucky dragons makes live music, video projection, and sounds created in collaboration with the audience.

Within this segment, she discussed the appeal of pop music, underscoring its formulaic nature, as well as people’s various responses to this music. She also noted ways in which machine learning, specifically synthesizers, can be incorporated into the process of creating pop music, and closed with a discussion of Michelle Mogai (?), an artist who uses machine learning primarily in her performances.

Following this discussion, she summarized the main points of her talk and opened up the conversation for questions from the audience.

Collaboration Questions:

Is it possible to translate a performance that is interpretive/improvisational to a machine?

How can we teach a computer to listen to sound?

What makes listening different from seeing? And how do we code listening?

What subjective processes occur in that space and/or activity of coding?

How can we teach machine behaviors and machine learning while at the same time considering human biases and subjectivities?

What kind of listening are you using, based on Pierre Schaeffer’s four modes of listening?

A recap by CCAM Temporary Experts Ernestina Hsieh and Cathryn Seibert. Photo by Aaron Peirano Garrison.